New research warns it will be almost impossible for African cropland to feed the continent by 2050 without massive changes to farming.

The prospect that Africa’s harvests will be enough to feed all its people by mid-century is remote unless it can make huge improvements in farming on its existing cropland, a new report says.

The authors say the improvements needed will amount to “a large, abrupt acceleration in the rate of yield increase”.

If the continent seeks instead to close the gap between food production and people’s needs by cultivating new areas, they say, this will cause serious damage to wildlife and higher emissions of greenhouse gases.

They say that to avoid this, Africa must either match North American and European standards of agricultural efficiency (a dauntingly difficult task implying an improvement of 60% in the next 30 years) or find the money to pay for grain imports.

They suggest a different answer to help close the gap: agriculture that is not just more efficient but also more intensive on already-farmed land.

The latest study reinforces growing concern that Africa faces a very hungry future, whether from the extreme weather brought by the direct impacts of climate change, from the sheer speed of its onset, from population growth, or from a perfect storm of all these threats together.

Read more at African Cropland Heading for Food Deficit

News related to climate change aggregated daily by David Landskov. Link to original article is at bottom of post.

Saturday, December 31, 2016

Friday, December 30, 2016

Flood Threats Changing Across US

University of Iowa study finds flood risk growing in the North, declining in the South

The risk of flooding in the United States is changing regionally, and the reasons could be shifting rainfall patterns and the amount of water in the ground.

In a new study, University of Iowa engineers determined that, in general, the threat of flooding is growing in the northern half of the U.S. and declining in the southern half. The American Southwest and West, meanwhile, are experiencing decreasing flood risk.

UI engineers Gabriele Villarini and Louise Slater compiled water-height information between 1985 and 2015 from 2,042 stream gauges operated by the U.S. Geological Survey. They then compared the data to satellite information gathered over more than a dozen years by NASA's Gravity Recovery and Climate Experiment (GRACE) mission showing "basin wetness," or the amount of water stored in the ground.

They found that the northern sections of the country generally have more water stored in the ground, and are thus at greater risk of minor and moderate flooding, two flood categories used by the National Weather Service. Meanwhile, minor to moderate flood risk was decreasing in the southern portions of the U.S., where stored water has declined. (See the above map.)

Not surprisingly, the NASA data showed decreased stored water (and reduced flood risk) in the Southwest and western U.S., in large part because of the prolonged drought gripping those regions.

Read more at Flood Threats Changing Across US

2017: Trump Peddles Climate Doubt in a World Sold on Action

The president-elect can surround himself with climate deniers, but he will govern in a world where climate change affects almost all international dealings.

President-elect Donald Trump may dismiss the Paris Agreement and pack his cabinet with climate deniers, but once he takes office, he will face a world that takes the climate crisis as seriously as he does not.

He will enter a complex web of diplomatic relations, where issues like trade, finance, migration, security, poverty, food aid and disaster relief are all intertwined and all have important links to the climate agenda. It's a world already dealing with significant climate impacts and sold on climate action.

"I am struck by the shift over the last few years in how the global community puts climate change on its agenda," Jonathan Pershing, President Obama's special envoy on climate, told InsideClimate News. "It is now virtually everywhere."

Since the signing of the Paris Agreement a year ago, addressing climate change has remained a major imperative for most of the world's nations. Enough countries ratified the accord so quickly that it entered into force early, in November. Shortly after Trump's surprising election, delegates from virtually every country in the world gathered in Marrakech to start putting the Paris treaty immediately into action.

Most countries also signed on to two other agreements this fall: one to reduce potent greenhouse gases used in refrigeration and another to cap emissions for the aviation industry.

Whatever the U.S. does under Trump, other countries "will move whether or not we are moving forward," Pershing predicted.

"It's already built into decisions that are being made," said Timothy Wirth, vice chair of the United Nations Foundation, a former Colorado Senator and a top climate negotiator for former President Clinton. "To try to fight an uphill, rearguard action against the realities of science and climate seems to be a very worthless political exercise. They'd get nothing out of it."

Nathaniel Keohane, climate vice president at the Environmental Defense Fund, said that U.S. climate action could be a litmus test to gauge how far the Trump administration is willing to go in other realms of cooperation. It may even become a prime bargaining chip.

"What a country does in terms of its climate commitments is taken as a signal of how it's going to act on its other commitments," he said. For other countries, "how they engage, how willing they are to align themselves with some of the U.S. asks and U.S. priorities," will be based partly on how America behaves on the climate front, he said.

"We would lose a seat at the table," said David Wirth, a law professor at Boston College and a former legal adviser to the State Department. "We would lose leverage. We would create an immense amount of ill will having taken on these obligations and now saying we are backing off for no obvious benefit."

Read more at 2017: Trump Peddles Climate Doubt in a World Sold on Action

Heat Is On for 2017, Just Not Record-Setting

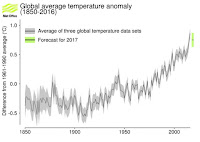

2016 is on track to be the hottest year on record, the third straight year to claim that title: a remarkable streak fueled primarily by the excess heat trapped in Earth’s atmosphere by ever-rising levels of greenhouse gases.

While that streak is expected to end, in part because of the demise of one of the strongest El Niños on record, 2017 is still expected to be among the hottest years in more than 130 years of record keeping, according to a forecast from the U.K. Met Office.

Because of global warming, “each new year is basically predestined to be among the warmest on record,” Deke Arndt, chief of the monitoring branch of the U.S. National Centers for Environmental Information, said in an email.

As a result, 16 of the 17 hottest years on record have occurred this century, the only exception being the strong El Niño year of 1998.

Each year, the Met Office uses climate models to forecast the global annual average temperature for the coming decade, in an effort to improve shorter-term climate forecasting of features like hurricane season activity and droughts.

Forecasters expect 2017’s temperature to fall between 1.13°F (0.63°C) and 1.57°F (0.87°C) above the 1961-1990 average.

Read more at Heat Is On for 2017, Just Not Record-Setting

Thursday, December 29, 2016

Has the OPEC Rally Gone Too Far?

Oil prices continue to edge up as 2016 comes to a close, a dramatic turnaround for the industry compared to the start of the year.

In January, oil prices were melting down, dropping below $30 per barrel. The industry was panicking, slashing spending and jobs, and it was hard to see any evidence of a rebound. By December, things look much different. The industry is adding rigs back to the shale patch, oil prices are rising, the market is moving closer to balance, and the OPEC deal could accelerate that adjustment. The consensus is that oil prices will post further gains in 2017.

But even as WTI (West Texas Intermediate) crude trades above $54 per barrel, the highest price since the summer of 2015, there are several reasons why oil should be trading much lower.

Read more at Has the OPEC Rally Gone Too Far?

2016: Canada's Oil Sands Downturn Hints at Ominous Future

Low oil prices that caused project cancellations, as well as new climate policies, have activists seeing the beginning of the end in Canada's oil patch.

It was a dark year for Canada's tar sands.

Plunging oil prices caused companies to cancel or delay nearly three dozen projects. Extensive wildfires forced producers to shut down operations for weeks. And after a decade that saw little action on climate change policy, Canadian officials began shaping plans to cap the tar sands' emissions and set a national price on carbon with an eye to meeting the country's commitment to the Paris climate agreement.

Now the question is whether the downturn is just a blip or is the start of a trend away from the expensive and carbon-intensive fuel.

If enacted, the policies would impose the most stringent limits on the industry to date and send a signal that energy companies must balance development of the oil sands with climate action.

"This has been one of the most difficult years for us," said Ben Brunnen, vice president of oil sands for the Canadian Association of Petroleum Producers. "In addition to the investment climate, we are seeing some pretty substantial federal and provincial policies."

Since oil prices tanked in 2014, energy companies have delayed or canceled at least 64 projects in Alberta's oil sands, according to data from JWN Energy. Across the industry, producers have slashed billions of dollars from their balance sheets, the latest example being Norway's Statoil divesting its oil sands assets in December.

In October, ExxonMobil said it may wipe 3.6 billion barrels of tar sands oil from its reported reserves early next year in its annual filing to the Securities and Exchange Commission. The announcement came amid investigations by state attorneys general and, reportedly, the SEC into whether the company misled investors about the business risks of climate change and the value of its holdings. Reserves measure the amount of oil that a company can profitably extract, and Exxon had long resisted calls to reduce its reserves figure as oil prices fell.

Read more at 2016: Canada's Oil Sands Downturn Hints at Ominous Future

Waste Gas Flared by Oil Industry Rising, Warns World Bank

Natural gas flaring at oil sites around the world is on the up, cutting the industry’s costs but damaging the climate

In 2015, 147 billion cubic meters (bcm) of natural gas was flared at oil production sites around the world, up from 145 bcm in 2014 and 141 bcm in 2013.

That’s a waste of energy on a massive scale: according to the World Bank, if the flared gas were used for power generation, it would be more than enough to meet the current annual electricity consumption of the whole of Africa.

Oil producers often prefer to burn off gas associated with oil extraction activities rather than invest capital in pipes and pumping stations to transport the gas to consumers.

Gas flaring is also a significant factor driving global warming. More than 16,000 gas flares at oil production sites around the world emit about 350 million tons of climate-changing CO2 into the atmosphere every year. The flares from numerous shale oil wells in the US can be seen from space.

The World Bank says flaring in northern areas of the globe is also a major source of black carbon, or soot, which, when deposited on Arctic ice, accelerates melting.

The latest data on flaring has been released by the Global Gas Flaring Reduction Partnership, a Bank-led organisation made up of governments, oil companies and various international bodies.

“The oil and gas industry has to step up and acknowledge that it’s time to change the way they do business,” a Bank official told Climate News Network.

According to the figures (gathered by the Bank and the US National Oceanic and Atmospheric Administration (NOAA) from advanced satellite sensors), Russia is the largest gas-flaring country, burning about 21 bcm annually, followed by Iraq (16 bcm), Iran (12 bcm), the US (12 bcm) and Venezuela (9 bcm).

Read more at Waste Gas Flared by Oil Industry Rising, Warns World Bank

The 360-Degree Rainbow - By Dr. Jeff Masters

Tropical cyclones (which include all hurricanes, typhoons, tropical storms and tropical depressions) are expected to change in intensity, frequency, location, and seasonality as a result of climate change. Many of the tropical cyclones of 2016 exhibited the type of behavior we expect to see more of due to global warming. Here, then, is a “top ten” list of such 2016 tropical cyclone events.

Examples of the strongest storms getting stronger

Tropical cyclones are heat engines that extract heat energy from the oceans and convert it to the kinetic energy of the storms' winds. Thus, the strongest tropical cyclones are expected to get stronger in a world with warmer oceans. It was not a surprise that in 2016, a year with the warmest ocean temperatures on record globally, we saw the strongest storms ever observed in two of the six ocean basins where tropical cyclones commonly occur. If we include the Northern Hemisphere’s strongest tropical cyclone on record, Hurricane Patricia of October 2015, records have been set in three of the six ocean basins over the past two years. The two all-time record storms in 2016 were Tropical Cyclone Winston in the South Pacific (180 mph winds, tied for strongest Southern Hemisphere storm on record) and Tropical Cyclone Fantala in the South Indian Ocean (175 mph winds). This year also saw seven Category 5 storms, the fifth-highest total on record (since 1990).

Two of the Top Five Landfalling Tropical Cyclones Occurred in 2016

In addition, 2016 saw two of the five strongest landfalling tropical cyclones ever recorded: Super Typhoon Meranti, with 190 mph winds on the Philippines’ Itbayat Island (tied for Earth’s strongest landfall on record), and Tropical Cyclone Winston, with 180 mph winds at landfall in Fiji (the fifth-strongest tropical cyclone at landfall in recorded history). As we blogged about in August, landfalling typhoons have become more intense since the late 1970s, with the peak winds of typhoons striking East and Southeast Asia increasing by 12–15% since 1977. “The projected ocean surface warming pattern under increasing greenhouse gas forcing suggests that typhoons striking eastern mainland China, Taiwan, Korea, and Japan will intensify further,” wrote the authors of the study we blogged about. “Given disproportionate damages by intense typhoons, this represents a heightened threat to people and properties in the region.”

Read more at The 360-Degree Rainbow

Wednesday, December 28, 2016

Solar Farms Expected to Outpace Natural Gas in U.S.

2016 is shaping up to be a milestone year for energy, and when the final accounting is done, one of the biggest winners is likely to be solar power.

For the first time, more electricity-generating capacity from solar power plants is expected to have been built in the U.S. than from either natural gas or wind, U.S. Department of Energy data show.

Though the final tally won’t be in until March, enough new solar power plants were expected to be built in 2016 to total 9.5 gigawatts of solar power generating capacity, tripling the new solar capacity built in 2015. That’s enough to light up more than 1.8 million homes.

The solar farms built in 2016 were expected to exceed the 8 gigawatts of natural gas power generating capacity and the 6.8 gigawatts of wind power slated for construction this year. No new coal-fired power plants were planned in 2016.

Read more at Solar Farms Expected to Outpace Natural Gas in U.S.

Tuesday, December 27, 2016

Rex Tillerson Supposedly Shifted Exxon Mobil’s Climate Position. Except He Really Didn’t.

The oil giant’s transformation on global warming was more rhetorical than anything else.

For decades, Exxon had funded far-right think tanks that seeded doubt over the scientific consensus on climate change. Under Tillerson’s predecessor, Lee Raymond, the company had aligned itself heavily with Republicans, funneling 95 percent of its political donations to the party from 2000 to 2004, according to Steve Coll’s 2012 book, Private Empire: ExxonMobil and American Power. Raymond took an aggressive stance against climate science: “It is highly unlikely that the temperature in the middle of the next century will be affected whether policies are enacted now or 20 years from now,” he said in a 1997 speech.

Tillerson and Ken Cohen, Exxon’s PR chief and chair of its political action committee, wanted to broaden the company’s political reach. One step was changing their messaging about climate change, moving away from the denial the company had been attacked for supporting. The Blue Ridge retreat was part of that effort, and brought together more than a dozen guests who, according to Coll’s account, included “two senior energy-policy analysts from the Brookings Institution, a human rights activist at Freedom House, climate specialists, business ethics professors, socially responsible investors, and religious activists.”

As Coll wrote, “Tillerson’s own views about climate science were not greatly different from Lee Raymond’s,” though Tillerson “did not claim or wish to project the same sort of independent scientific expertise that Raymond had offered about climate science.” But Tillerson did see that the company needed to reposition itself.

“All they were saying they were going to do is simply acknowledge that it might exist, as opposed to saying it does not exist,” Jennifer Bremer, an economic development consultant who attended the meeting as a guest, told The Huffington Post. Bremer said Exxon executives had called the retreat so the attendees could give them some advice, not so they could dictate the company’s new policy.

“You don’t just bring in a couple of hippies and start discussing internal deliberations,” Bremer added. “Not if you’re Exxon.”

...

The company announced its first big investment in biofuels two years later, vowing to spend $600 million on research into algae-based transportation fuels. In 2015, the firm backed the historic climate agreement reached in Paris, a stance it reiterated four days before this year’s election.

Tillerson, whom President-elect Donald Trump has now nominated as his secretary of state, has gotten a lot of credit for Exxon’s shift away from climate denial. But even as Exxon learned to talk the talk, the $378 billion company has failed to walk the walk.

Tillerson “brought a more clever approach, a more PR-savvy approach, to climate at Exxon, but the company really didn’t change its stripes much,” said Kert Davies, who leads the Climate Investigations Center and was the creator of ExxonSecrets, a Greenpeace program tracking the company’s climate denial. “They managed to drop this campaign of using surrogates, scale that back enough that it took the pressure off.”

In June 2009, the House passed a bill to set up a cap-and-trade system limiting carbon emissions, but the legislation failed to gain traction in the Senate. Exxon undertook an aggressive lobbying campaign that year, spending $27.4 million, more than the entire environmental lobby combined, according to the nonpartisan Center for Responsive Politics. The American Petroleum Institute, of which Exxon Mobil is a member, launched its own PR campaign against the legislation.

...

But even as recently as last year, Exxon continued to fund organizations that deny or downplay both climate science and proposed solutions to global warming. In 2015 the company spent nearly $2 million on more than a dozen such think tanks and advocacy groups, according to data compiled by Greenpeace and vetted by the Union of Concerned Scientists. That list ranges from the U.S. Chamber of Commerce, the country’s largest industry association, to more radical groups, such as The Federalist Society for Law and Public Policy Studies, which has called global warming “nothing more than an educated guess,” and the Mountain States Legal Foundation, which once described itself as the “litigation arm” of an “anti-environmental” movement.

And unlike many of its industry rivals, Exxon has failed to invest seriously in renewable energy. The company ranked below all of its U.S. and European competitors on reducing emissions and issuing corporate guidance on the risk posed by climate change, according to a November report from the British shareholder advocacy group CDP.

Tillerson has openly mocked the clean-energy industry for years, joking once that he wasn’t “really against renewables” because wind turbine operators bought Exxon’s oil as a lubricant. “The more windmills are built, the more oil we sell,” he said. At a May 2015 shareholders meeting, Tillerson said he hadn’t invested in renewables because “we choose not to lose money.”

...

“Tillerson is using half-truths to cover up decades of lies,” Jamie Henn, a spokesman for the environmental group 350.org, told HuffPost. “Saying climate change is real doesn’t absolve you from continuing to fund climate denial. It shouldn’t surprise anyone that the world’s largest oil company is good at greenwashing ― they spend more money on it than they do on renewable energy development.”

Read more at Rex Tillerson Supposedly Shifted Exxon Mobil’s Climate Position. Except He Really Didn’t.

2016: Obama's Climate Legacy Marked by Triumphs and Lost Opportunities

By relying on executive orders and regulations after his legislative majority disappeared, President Obama leaves his climate policies at risk under Donald Trump.

For all of President Barack Obama's sweeping and historic achievements on climate change, most have come in a last rush of momentum in the final years of his second term. What they overshadow is that his greatest opportunity to reshape how the U.S. deals with what he called the greatest threat to future generations may have come in his first term, and it was lost to the pull of other priorities.

Obama had Democratic majorities in Congress during his first two years in office, and failing to press for national climate legislation during that time turned into perhaps his greatest strategic miscalculation, according to climate experts and advocates.

"The first term was essentially lost territory," said Daniel Kammen, founding director of the Renewable and Appropriate Energy Laboratory at the University of California, Berkeley. "The second term was a totally different story."

Obama had promised "a new chapter in America's leadership on climate change," but when he took office, he was facing the worst economic crisis since the Great Depression. His priorities were saving major American industries, restoring faith in the economy and stemming spiraling unemployment. The Recovery Act, the bailout of the auto industry, and the Wall Street reform act, Dodd-Frank, were at the top of the agenda Obama's team pushed for, followed by health care reform.

Had the White House pushed for a comprehensive national climate plan early, it could have given Obama's climate agenda legislative backing, making it much harder for his successor to undo. A cap-and-trade bill, Waxman-Markey, based heavily on a proposal by a coalition of industry and environmental groups, had squeaked through the House in 2009. But after its Republican backers in the Senate got cold feet, Obama rallied no support behind it and it fizzled there. Obama never developed his own legislative proposal to replace it.

...

A Second, Climate-Action-Filled Term

Obama began his second term with a note of defiance against the forces aligned against climate action.

"We will respond to the threat of climate change, knowing that the failure to do so would betray our children and future generations," he said in his second inaugural address before hundreds of thousands of people, tying the climate fight to the enduring struggles for equality and justice.

He unveiled his climate initiative on a hot June day on Georgetown University's campus, literally rolling up his shirtsleeves as he spoke. "The question now is whether we will have the courage to act before it's too late," he said. "I refuse to condemn your generation and future generations to a planet that's beyond fixing."

Obama's EPA moved on in his second term to tackling truck emissions, reining in methane leaks from the oil and gas industry and updating energy efficiency standards for home appliances. Obama established 23 national monuments, more than any other president in history, including the Papahanaumokuakea National Marine Monument in Hawaii, an ocean reserve twice the size of Texas.

The U.S. delegation also led the effort to amend an international agreement, the Montreal Protocol, to cut hydrofluorocarbons, highly potent greenhouse gases used in refrigeration. That one agreement will negate the equivalent of 10 years of U.S. emissions.

Obama used a "thousand small hammers" to fashion a U.S. climate policy without the help of Congress, in the words of David Victor, director of the Laboratory on International Law and Regulation at the University of California, San Diego.

"Frankly, I think that's probably pretty good news, because there's going to be such an effort to roll it back," Victor said. "Having dozens of things, not all of which are going to be rolled back, is better than having one or two prime targets."

...

Obama's mixed climate legacy is reflected in statistics. U.S. carbon dioxide emissions from energy fell by 9.5 percent from 2008 to 2015 and, in the first six months of 2016, were at their lowest level in 25 years, according to a report by the White House Council of Economic Advisers. Improving vehicle fuel economy and the expansion of renewable energy are part of the reason. (The U.S. tripled its wind-generated electricity and gets 30 times as much from solar as it did in 2008.) But the move from coal to newly abundant natural gas played a major role.

Under Obama, natural gas production, flat for the decade before he took office, rose 28 percent. U.S. oil production has soared 76 percent. On Obama's watch, the United States surpassed Russia in gas production and Saudi Arabia in oil production.

Read more at 2016: Obama's Climate Legacy Marked by Triumphs and Lost Opportunities

Yes, the Arctic’s Freakishly Warm Winter Is Due to Humans’ Climate Influence

For the Arctic, like the globe as a whole, 2016 has been exceptionally warm. For much of the year, Arctic temperatures have been much higher than normal, and sea ice concentrations have been at record low levels.

The Arctic’s seasonal cycle means that the lowest sea ice concentrations occur in September each year. But while September 2012 had less ice than September 2016, this year the ice coverage has not increased as expected as we moved into the northern winter. As a result, since late October, Arctic sea ice extent has been at record low levels for the time of year.

These record low sea ice levels have been associated with exceptionally high temperatures for the Arctic region. November and December (so far) have seen record warm temperatures. At the same time Siberia, and very recently North America, have experienced conditions that are slightly cooler than normal.

Extreme Arctic warmth and low ice coverage affect the migration patterns of marine mammals and have been linked with mass starvation and deaths among reindeer, as well as affecting polar bear habitats.

Given these severe ecological impacts and the potential influence of the Arctic on the climates of North America and Europe, it is important that we try to understand whether and how human-induced climate change has played a role in this event.

Arctic attribution

Our World Weather Attribution group, led by Climate Central and including researchers at the University of Melbourne, the University of Oxford and the Dutch Meteorological Service (KNMI), used three different methods to assess the role of the human climate influence on record Arctic warmth over November and December.

We used forecast temperatures and heat persistence models to predict what will happen for the rest of December. But even with 10 days still to go, it is clear that November-December 2016 will be record-breakingly warm for the Arctic.

Next, I investigated whether human-caused climate change has altered the likelihood of extremely warm Arctic temperatures, using state-of-the-art climate models. By comparing climate model simulations that include human influences, such as increased greenhouse gas concentrations, with ones without these human effects, we can estimate the role of climate change in this event.

...

To put it simply, the record November-December temperatures in the Arctic do not happen in the simulations that leave out human-driven climate factors. In fact, even with human effects included, the models suggest that this Arctic hot spell is a 1-in-200-year event. So this is a freak event even by the standards of today’s world, which humans have warmed by roughly 1℃ on average since pre-industrial times.

But in the future, as we continue to emit greenhouse gases and further warm the planet, events like this won’t be freaks any more. If we do not reduce our greenhouse gas emissions, we estimate that by the late 2040s this event will occur on average once every two years.
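The return periods quoted above translate directly into annual probabilities. Below is a minimal sketch of that arithmetic, assuming each year is statistically independent (a simplifying assumption for illustration, not part of the attribution group's method). It shows that moving from a 1-in-200-year event to a once-every-two-years event is roughly a hundredfold increase in annual likelihood.

```python
def annual_probability(return_period_years: float) -> float:
    """Chance the event occurs in any single year, given its return period."""
    return 1.0 / return_period_years


def chance_within(years: int, return_period_years: float) -> float:
    """Chance of at least one occurrence over `years`, assuming independent years."""
    p = annual_probability(return_period_years)
    return 1.0 - (1.0 - p) ** years


today = annual_probability(200)      # 1-in-200-year event in today's climate
late_2040s = annual_probability(2)   # once every two years on average

print(f"Annual chance today: {today:.3%}")
print(f"Annual chance by the late 2040s: {late_2040s:.0%}")
print(f"Increase in likelihood: {late_2040s / today:.0f}x")
print(f"Chance of at least one such event in a decade today: {chance_within(10, 200):.1%}")
```

The independence assumption ignores the warming trend within any multi-year window, so the decade figure is only a rough guide.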

Read more at Yes, the Arctic’s Freakishly Warm Winter Is Due to Humans’ Climate Influence

Monday, December 26, 2016

WattTime, the Tool that Tells You When to Charge Your EV to Keep It Green

Gavin McCormick’s attendance at an EcoHack in San Francisco ultimately sidetracked his doctoral studies at the University of California, Berkeley, but for a clean cause: software initiated at the 2013 event has transformed the budding economist’s nascent research into a potent tool for squeezing the cleanest performance out of power grids. Real-time algorithms from WattTime, the Berkeley-based nonprofit that McCormick cofounded and runs, are telling electric vehicle owners when to charge to minimize their carbon footprints and predicting which renewable power projects will deliver the biggest CO2 emissions reductions.

WattTime tracks swings in carbon dioxide emissions as a grid’s power supply mix shifts from minute to minute. Its intelligence, explains McCormick, comes from mining two datasets. The first is power market data. Algorithms trained on that data enable WattTime to predict which power plant will ramp up to meet new electrical demand at any moment in 106 markets across the United States.

WattTime turns that ‘marginal’ power supply prediction into an estimate of ‘marginal’ carbon impact by plumbing an underused database from EPA’s Air Markets Program. That database contains hourly records of pollution and fuel consumption for every U.S. power plant. “It’s been around for about 40 years and no one was paying attention to it,” says McCormick.

The resulting estimate of CO2 per kilowatt-hour can be strikingly different from a grid’s average emissions intensity—the most commonly used metric for evaluating power consumption. A grid delivering 90 percent nuclear or renewable power, for example, will have very low average emissions. But WattTime can spot when such a grid is ramping up a carbon-spewing coal plant to meet additional demand, and thus has very high marginal intensity.
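The gap between average and marginal intensity is easy to see with toy numbers. The sketch below is a hypothetical illustration, not WattTime's algorithm: it derives each plant's emission rate from hourly CO2 and generation records of the kind the EPA database holds, then compares the grid's generation-weighted average intensity with the rate of the plant assumed to meet the next unit of demand. The plant names, outputs and emissions are invented, and the marginal plant is simply given rather than predicted from market data.

```python
from dataclasses import dataclass


@dataclass
class PlantHour:
    """One hour of (hypothetical) plant records: output and CO2 emitted."""
    name: str
    generation_mwh: float
    co2_tonnes: float

    @property
    def rate_kg_per_kwh(self) -> float:
        # Tonnes per MWh is numerically equal to kg per kWh.
        return self.co2_tonnes / self.generation_mwh if self.generation_mwh else 0.0


def average_intensity(plants: list[PlantHour]) -> float:
    """Generation-weighted average CO2 intensity of the whole grid (kg/kWh)."""
    total_co2 = sum(p.co2_tonnes for p in plants)
    total_gen = sum(p.generation_mwh for p in plants)
    return total_co2 / total_gen


def marginal_intensity(plants: list[PlantHour], marginal_name: str) -> float:
    """CO2 intensity of the plant assumed to serve the next unit of demand."""
    plant = next(p for p in plants if p.name == marginal_name)
    return plant.rate_kg_per_kwh


# A hypothetical hour on a grid that is 90 percent nuclear and renewables.
hour = [
    PlantHour("nuclear", 600.0, 0.0),
    PlantHour("wind", 300.0, 0.0),
    PlantHour("coal", 100.0, 100.0),  # assumed 1 t/MWh, a rough coal-plant figure
]

print(f"Average intensity:  {average_intensity(hour):.2f} kg CO2/kWh")
print(f"Marginal intensity: {marginal_intensity(hour, 'coal'):.2f} kg CO2/kWh")
```

With these invented figures the grid looks clean on average, yet each extra kilowatt-hour drawn while coal is on the margin carries ten times the average intensity.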

WattTime is partnering with manufacturers to empower consumers to make cleaner choices. For example, a line of EV chargers from San Carlos, Calif.–based Electric Motor Werks communicates with WattTime when a car is plugged into the charger and then schedules charging to deliver the greenest fill possible.

eMotorWerks claims its JuiceBox Green can cut CO2 emissions by up to 60 percent relative to conventional EV chargers. In fact, analysis by WattTime suggests that chargers designed to optimize for price, rather than emissions, can actually increase carbon emissions (see graph).
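The scheduling idea, and why optimizing for price can backfire, can be sketched with a toy forecast. This is a hypothetical illustration, not the JuiceBox or WattTime logic: a charger that needs a fixed number of hours picks either the cheapest hours or the hours with the lowest forecast marginal emissions. All prices, emission rates and the 7 kWh-per-hour charging rate are invented; in this made-up forecast the cheapest overnight hours happen to have coal on the margin.

```python
def pick_hours(values: dict[int, float], hours_needed: int) -> list[int]:
    """Return the `hours_needed` hours with the smallest values, in time order."""
    best = sorted(values, key=values.get)[:hours_needed]
    return sorted(best)


def charging_emissions(hours: list[int], marginal: dict[int, float],
                       kwh_per_hour: float) -> float:
    """kg CO2 from charging in `hours`, using marginal rates in kg/kWh."""
    return sum(marginal[h] for h in hours) * kwh_per_hour


# Hypothetical six-hour forecast, indexed by hours after the car is plugged in.
price_usd_per_kwh = {0: 0.14, 1: 0.11, 2: 0.07, 3: 0.06, 4: 0.08, 5: 0.12}
marginal_kg_per_kwh = {0: 0.45, 1: 0.50, 2: 0.95, 3: 1.00, 4: 0.90, 5: 0.40}
KWH_PER_HOUR = 7.0  # assumed charger output
HOURS_NEEDED = 3

price_plan = pick_hours(price_usd_per_kwh, HOURS_NEEDED)
green_plan = pick_hours(marginal_kg_per_kwh, HOURS_NEEDED)

for label, plan in (("price-optimized", price_plan), ("emissions-optimized", green_plan)):
    kg = charging_emissions(plan, marginal_kg_per_kwh, KWH_PER_HOUR)
    print(f"{label}: hours {plan}, {kg:.2f} kg CO2")
```

A real charger would also respect the driver's departure time and re-plan as forecasts update; this sketch ignores both.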

Canadian smart thermostat provider Energate, meanwhile, is looping in WattTime’s intelligence to time electric heating and air conditioning for minimum carbon emissions. A pilot of the devices is underway in Chicago. Two more partnerships are in place, and “many others are kicking the tires,” says McCormick, among them energy storage system firm Advanced Microgrid Solutions.

These devices turn the tables on power supply, enabling consumers to essentially game the grid and help keep the highest carbon power sources from ramping up—or even from turning on in the first place. WattTime guarantees at least a 5 percent reduction in emissions. But McCormick says it can deliver up to a 100 percent reduction in some locations such as Hawaii, where curtailed solar power is often the marginal power supply.

WattTime’s next big play is targeting the installation of clean energy. Its algorithms can predict in which regions new solar and wind farms are most likely to displace the dirtiest fossil-fuelled power plants.

WattTime is already providing such advice to corporations investing in renewable power, via the Rocky Mountain Institute, a Boulder, Colo.–based energy think tank. However, McCormick says dramatically shifting investment trends will require an overhaul of carbon accounting schemes, which mostly certify carbon reductions based on grid averages.
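One simple way to frame the siting question, offered as a hypothetical sketch rather than WattTime's method: estimate a project's avoided emissions as the sum, over hours, of its expected output times the marginal emission rate it would displace in that hour, and compare that with a grid-average estimate. Region names, hourly profiles, rates and the 0.5 t/MWh grid average are all invented, and the sketch assumes each project is too small to change which plant is marginal.

```python
def avoided_tonnes(output_mwh: list[float], marginal_t_per_mwh: list[float]) -> float:
    """Hour-by-hour output times the displaced marginal emission rate, summed."""
    if len(output_mwh) != len(marginal_t_per_mwh):
        raise ValueError("profiles must cover the same hours")
    return sum(g * m for g, m in zip(output_mwh, marginal_t_per_mwh))


def average_based_tonnes(output_mwh: list[float], grid_average_t_per_mwh: float) -> float:
    """The grid-average accounting shortcut: total output times one average rate."""
    return sum(output_mwh) * grid_average_t_per_mwh


# Four representative hours for two hypothetical candidate regions:
# (expected output in MWh, marginal emission rate in t CO2/MWh).
candidates = {
    "solar_region_a": ([0.0, 40.0, 50.0, 10.0], [0.9, 0.4, 0.4, 0.5]),
    "wind_region_b": ([30.0, 20.0, 10.0, 30.0], [1.0, 0.9, 0.8, 1.0]),
}
GRID_AVERAGE = 0.5  # assumed to be the same in both regions

for site, (output, marginal) in candidates.items():
    print(f"{site}: marginal {avoided_tonnes(output, marginal):.1f} t, "
          f"average-based {average_based_tonnes(output, GRID_AVERAGE):.1f} t")

ranking = sorted(candidates, key=lambda s: avoided_tonnes(*candidates[s]), reverse=True)
print("Best site by marginal avoided emissions:", ranking[0])
```

In this toy case the average-based shortcut favors the solar site because it produces more energy, while the marginal view favors the wind site because its output lands in hours when dirtier plants are on the margin.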

Read more at WattTime, the Tool that Tells You When to Charge Your EV to Keep It Green

Russia Sees South Africa as a Potentially Lucrative Market for Nuclear Power Plants

Rosatom, the Russian state-owned conglomerate that is building nuclear power plants in Russia and around the world, has confirmed that it is closely monitoring the public discussion that is taking place in South Africa over the newly released integrated energy plan.

South Africa’s draft energy plan isn’t being presented to the public as a fait accompli. Instead, the draft provides information about several likely scenarios, all of which would include the 9.6 GWe of new nuclear plant capacity described in the 2010 version of the plan. There is some variation in the timing for the completion of the first new units depending on selected strategies for optimizing costs and balancing completion delays against the increased quantities of pollutants that would be emitted by delaying the introduction of new nuclear.

In the base case, the first new nuclear plant would come on line by 2026, with the first tranche of a continuing construction program completed by the early to mid 2030s. In that base case, nuclear energy would provide 29.5 GWe by 2050. All of the capacity would have to come from plants that are not yet built. South Africa’s only operating nuclear units were completed in the second half of the 1980s and are not expected to still be operating in 2050.

There is a scenario in the plan called a “nuclear relaxed” case that allows the currently proposed building program to be delayed, based on slower-than-expected growth in electricity demand. This case was considered available for study because, unlike the rest of the capacity additions identified in the 2010 plan, there are not yet any signed commitments to begin construction.

If this scenario becomes the selected plan, the delays will be limited. Even under the most pessimistic assumptions of demand growth, new nuclear will need to begin coming on line by 2030 instead of 2026 to avoid a shortage of generating capacity. The plan acknowledges that the delay doesn’t provide much additional time, given the long lead times required to plan and construct nuclear power plants.

In the nuclear relaxed case, the plan acknowledges that certain climate and air pollution targets will be missed as a result of continuing to rely on dirtier power sources for a longer period of time.

The plan authors worked hard to provide the public with information about costs, employment, pollution, fossil fuel dependency, water usage and, crucially in a country with historical development inequalities, access to reliable electricity.

Read more at Russia Sees South Africa as a Potentially Lucrative Market for Nuclear Power Plants

Online Calculator Cuts Farms’ Greenhouse Emissions

An internet tool is now available which helps to quantify and control farms’ greenhouse emissions released during the crop production cycle.

It’s called the Cool Farm Tool (CFT) – an easy-to-use online calculator which helps farmers monitor their emissions of greenhouse gases.

Agriculture accounts for about 15% of total global greenhouse gas emissions, though that figure is considerably higher when the manufacture and use of fertiliser, and the wider food processing sector, are included in the calculations.

The land can also act as a vital carbon sink, soaking up or sequestering vast amounts of carbon: when soils are disturbed the carbon is released, adding to greenhouse gases in the atmosphere.

The CFT was initially developed by researchers at the University of Aberdeen in the UK in partnership with Unilever and the Sustainable Food Lab.

Now managed by a group including academics and food manufacturers called the Cool Farm Alliance, the CFT is free for farmers to download.

Various details, including the crops being planted, soil types and pH levels (the relative acidity or alkalinity of the land), are entered into a series of boxes.

Moisture levels, amounts and types of fertiliser used and general management details are also entered, along with information on quantities of diesel and electricity used in the cultivation and storage of crops and the fuel needed to transport goods on and off the farm.

Halving emissions

In 2010 PepsiCo, the drinks and food conglomerate, launched a programme aimed at making its operations more environmentally friendly.

In particular it sought to halve the amount of greenhouse gas emissions and water use arising from production at its Walkers Crisps factory at Leicester in the UK – the largest such plant in the world, producing five million packets of crisps (known as potato chips in the US) every day.

A central part of the PepsiCo project involved encouraging its potato suppliers to farm more sustainably through the use of the CFT and by using other devices to monitor and cut back on water use. New potato varieties with improved yields were also introduced.

Within six years, both goals, halving carbon emissions and cutting water use by 50%, had been reached.

Read more at Online Calculator Cuts Farms’ Greenhouse Emissions

It’s called the Cool Farm Tool (CFT) – an easy-to-use online calculator which helps farmers monitor their emissions of greenhouse gases.

Agriculture accounts for about 15% of total global greenhouse gas emissions, though when fertiliser manufacture and use and the overall food processing sector are included in calculations, that figure is considerably higher.

The land can also act as a vital carbon sink, soaking up or sequestering vast amounts of carbon: when soils are disturbed the carbon is released, adding to greenhouse gases in the atmosphere.

The CFT was initially developed by researchers at the University of Aberdeen in the UK in partnership with Unilever and the Sustainable Food Lab.

Now managed by a group including academics and food manufacturers called the Cool Farm Alliance, the CFT is free for farmers to download.

Various details, including the crops being planted, soil types and pH levels (the relative acidity or alkalinity of the land), are entered into a series of boxes.

Moisture levels, amounts and types of fertiliser used and general management details are also entered, along with information on quantities of diesel and electricity used in the cultivation and storage of crops and the fuel needed to transport goods on and off the farm.

Halving emissions

In 2010 PepsiCo, the drinks and food conglomerate, launched a programme aimed at making its operations more environmentally friendly.

In particular it sought to halve the amount of greenhouse gas emissions and water use arising from production at its Walkers Crisps factory at Leicester in the UK – the largest such plant in the world, producing five million packets of crisps (known as potato chips in the US) every day.

A central part of the PepsiCo project involved encouraging its potato suppliers to farm more sustainably through the use of the CFT and by using other devices to monitor and cut back on water use. New potato varieties with improved yields were also introduced.

Within six years, the goal of halving carbon emissions and achieving a 50% reduction in water use was reached.

Read more at Online Calculator Cuts Farms’ Greenhouse Emissions

Sunday, December 25, 2016

Fast Reactors Are Alive and Kicking

Fast breeder reactors have already been successfully developed in Russia and they will become successful outside of Russia too if policymakers and investors decide to make them a priority, writes Ian Hore-Lacy, Senior Research Analyst at the World Nuclear Association.

Forged ahead

Though several countries have stated vague objectives of deploying a large number of fast reactors by mid-century, Russia is really the only country that has forged ahead with them. Its BN-600 at Beloyarsk has operated well, supplying electricity to the grid since 1980, and is said to have the best operating and production record of all Russia’s nuclear power units.

Its successor is the BN-800, also at Beloyarsk. This is a newer, more powerful fast neutron reactor (FNR), although it has the same overall size and configuration as BN-600. It does, however, incorporate some significant improvements over BN-600. The first BN-800 (and probably the only Russian one) is Beloyarsk-4, which started up in mid-2014 and recently went into commercial operation. Whereas several BN-800s were once envisaged, this unit at Beloyarsk has essentially become a test rig for fuel, and its main purpose is now to provide operating experience and technological solutions, especially regarding fuel, that will be applied to the BN-1200.

The BN-1200 fast reactor is being developed as a next step towards Generation IV types, and the design was expected to be complete this year. Rosatom’s Science and Technology Council has approved the BN-1200 reactor for construction at Beloyarsk, with plant operation from about 2025. A second one is to be built at South Urals by 2030, and further units are envisaged after that. The BN-1200 is significantly different from preceding BN models, and Rosatom plans to submit it to the Generation IV International Forum (GIF) as a Generation IV design.

This is the only firm program of large commercial fast reactors at this stage. However, Russia is also active with smaller and more innovative FNR designs. It has experimented with several lead-cooled reactor designs, and used lead-bismuth cooling for 40 years in the reactors of its seven Alfa-class submarines, not very successfully but accumulating 70 reactor-years of experience.

A significant new Russian design that moves away from sodium cooling is the BREST fast neutron reactor, of 300 MWe or more, with lead as the primary coolant at 540°C and supercritical steam generators. A pilot unit is planned at Seversk, and 1200 MWe units are proposed. Interestingly, Westinghouse has also signalled real interest in a lead-cooled fast reactor design.

Getting into the small modular reactor scene is Russia’s lead-bismuth fast reactor (SVBR) of about 100 MWe. This is an integral design, which can use a wide variety of fuels. The unit would be factory-made and shipped as a 4.5 m diameter, 7.5 m high module, then installed in a tank of water which gives passive heat removal and shielding. A power station with 16 such modules is expected to supply electricity at low cost as well as achieving inherent safety and high proliferation resistance. A new cooperation agreement with China may advance plans for this, since, in contrast with other nuclear R&D there, China’s own FNR program seems stalled.

Astrid

In addition to the Russian programme, there are many other fast reactor designs around the world being investigated by governments and private enterprise, and time will tell which will succeed. Most are relatively small.

One worth mentioning is Astrid, a French project with Japanese input. Astrid is envisaged as a 600 MWe prototype of a commercial series of 1500 MWe sodium-cooled fast reactors which are likely to be deployed from about 2050 to utilise the half million tonnes of depleted uranium that France will have by then. Astrid will have high fuel burn-up, including minor actinides in the fuel elements, and its mixed oxide (MOX) fuel will be broadly similar to that in Europe’s current reactors.

Another is GE-Hitachi’s PRISM, based on a smaller US fast reactor which ran for 30 years to 1994. It is 311 MWe, a convenient size for replacing fossil fuel units, and its metallic fuel is derived from used fuel from conventional reactors. In October 2016 GEH signed an agreement with a subsidiary of Southern Nuclear Operating Company to collaborate on licensing fast reactors, including PRISM, in the USA.

Read more at Fast Reactors Are Alive and Kicking